After much frustration with Windows Live Writer (Beta) and Blogger (Beta) having communications issues, I am now back blogging with Windows Live Writer.

Gotta love trying to get two beta products communicating.

A software developer who noticed one day that all software developers were active bloggers, and was wondering if he was missing out on something......

An interesting post on software environment issues by Mitch Denny caught my attention today. Partly this was because he sent a link around on our internal tech list, but mostly it was because it describes something that I think continually trips up a lot of developers (myself included). Mitch argues (and I agree) that development should be done in the same basic environment as the production system. He contrasts this with infrastructure people, who tend to want to keep a gap between production and development for safety reasons. After all, who knows what holes these crazy developers are going to poke in our top-notch security infrastructure?

Most of my experience has been in the shrink-wrapped software industry, where there is little point trying to match development and production environments, because "production" is whatever your customers happen to run. In that case I suggest developing on whichever environment makes the developers most productive. Then make sure you test on ALL platforms in your supported platform matrix, using whatever virtualisation technology you prefer.

With enterprise development, however, I would say that one should develop in an environment as close as is practicable to the production environment. It never ceases to amaze me how many problems arise because of differences between the development and production environments. Have you ever heard a developer say "gee, it doesn't do that on my machine"? The closer you make development environments to the production environment, the less you'll hear this annoying phrase.

The flip side of the coin is that developers need to understand the differences between their development and production environments fairly well. One difference that has bitten me in the past, and a lot of other developers as well, is the one between IIS and the Visual Studio Development Web Server (the web server formerly known as Cassini). There are a number of differences between the two, and also a number of reasons why developers like to use Cassini over IIS. The difference that always seems to trip me up is that Cassini passes every requested file through the ASP.Net pipeline. IIS, by contrast, serves a lot of files directly (e.g. *.css, *.jpg, *.gif, *.png, *.html, etc.) and only passes the ASP.Net-specific files (*.aspx, *.asmx, *.ashx, etc.) through to the ASP.Net pipeline.

When you first start a project you don't really notice any difference. Then one day you think to yourself, "gee, it would be nice to add forms authentication now", so you put in the standard `<deny users="?" />` element. All of a sudden your login page has no graphics and looks nothing like how you designed it. This is because Cassini is sending all of your image and CSS files through the ASP.Net pipeline, where the URL authorization module blocks them because you are not authenticated... yet.
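To make the difference concrete, here is a toy model of the behaviour described above. It is a sketch under stated assumptions, not how either server is actually implemented. `goesThroughPipeline`, `isBlockedForAnonymous` and the extension list are hypothetical. The model assumes that IIS maps only the ASP.Net extensions listed above to the pipeline, and that the login page is the only page anonymous users may reach.

```javascript
// Toy model only: which requests are handled by the ASP.Net pipeline?
// Assumption: IIS maps just these extensions to ASP.Net and serves
// everything else (images, CSS, HTML) itself; Cassini maps everything.
const ASPNET_EXTENSIONS = [".aspx", ".asmx", ".ashx"];

function extensionOf(path) {
  const dot = path.lastIndexOf(".");
  return dot === -1 ? "" : path.slice(dot).toLowerCase();
}

function goesThroughPipeline(path, server) {
  if (server === "cassini") {
    return true; // every request goes through ASP.Net
  }
  return ASPNET_EXTENSIONS.includes(extensionOf(path)); // IIS: mapped files only
}

// With <deny users="?" /> in place, an anonymous request that reaches the
// pipeline is redirected to the login page instead of being served.
// Assumption: the login page itself is always allowed.
function isBlockedForAnonymous(path, server, loginPage) {
  if (path === loginPage) {
    return false;
  }
  return goesThroughPipeline(path, server);
}

console.log(isBlockedForAnonymous("/images/logo.gif", "iis", "/login.aspx"));     // false
console.log(isBlockedForAnonymous("/images/logo.gif", "cassini", "/login.aspx")); // true
```

Under the "cassini" setting the logo request is blocked, which produces exactly the missing-graphics symptom. One common workaround is a `<location>` element in web.config that allows anonymous users into the folders holding images and stylesheets.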

I have spent the morning struggling with Visual Studio. It has been crashing non-stop, but I have got to the bottom of the problem, and am happy to share with the rest of the world.

Visual Studio was happily starting and loading my solution, but whenever I clicked on the Test menu and attempted to do something like open the TestView window, VS crashed. If I tried to open a localtestrun.testrunconfig file, it would crash with the following error: "Could not open assembly System.Data version 2.0.0.0 .... The system cannot find specified path".

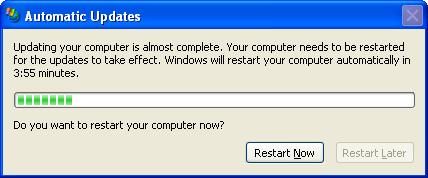

I won't bore you with the process I had to go through to find the resolution. In short, my machine had installed some updates from Windows Automatic Updates that morning. Most of these updates were successful, but one of them, "Security Update for Microsoft .NET Framework, Version 2.0 (KB922770)", had failed with the error "Error Code: 0x643". After searching for this particular error I found the following article: http://support.microsoft.com/kb/923100. However, I did not need to follow the resolution outlined there. I simply went to the Windows Update site and installed the update manually.

I hope this helps someone out before they have to spend half a day like I did trying to track it down.

Today I found a problem with the PopupControlExtender (part of the ATLAS Control Toolkit). It occurs when the extender is embedded inside an EditTemplate for a GridView control. I suspect the same problem would occur inside any template field, and potentially for other ATLAS Control Toolkit controls.

I wanted to edit a date field inside my EditTemplate, so I set about implementing something not too dissimilar to the PopupControlExtender example on the Atlas Control Toolkit website. First I prototyped it on a page on its own. There were no problems, and everything worked as expected. Then I placed the same code inside my EditTemplate field. At this point, when I attempted to go into edit mode, I received the error message "Assertion Failed unrecognized tag atlascontroltoolkit:popupControlBehavior". Evidently the necessary JavaScript libraries were not being downloaded when the page rendered. I assume this is because of the dynamic way in which the EditTemplate renders.

To fix this problem, I simply placed an empty PopupControlExtender onto the design surface of my page. This ensures that the necessary JavaScript files are downloaded, so when the EditTemplate is rendered, the ATLAS engine knows what you're talking about.

I also encountered a couple of bugs when the popup control was wrapped inside an UpdatePanel. I managed to fix these simply by downloading the latest CTP of the ATLAS Control Toolkit (now called the "Microsoft ASP.Net Ajax Toolkit"... sorry guys, but this is yet another example of how insisting on meaningful product names can kill any excitement and mystique around a product. I'm afraid it just doesn't have the same panache as "Ruby On Rails". If you really want to speak to today's buzzword-conscious managers, you have to break away from boring naming conventions and inject some creativity into the process).

I have to be the slowest blogger in the IT community. Over a week ago I attended the Web Directions conference. The conference was really good, and has inspired me in a few areas. Many other bloggers have had their say on the conference, but I may as well have mine, just for the sake of redundancy.

Both the accessibility speakers (Gian Sampson-Wild and Derek Featherstone) and the user experience speaker (Kelly Goto) kept returning to one theme: user testing, rather than just asking users what they think. Kelly Goto's quote was 'We listen to what the users "didn't say" and observed what they did'. I have also been challenged in the area of accessibility. For me the key quote came from Derek Featherstone: "The web is Accessible by default, we make it in-accessible". It has inspired me to look at the way I develop and at the bad habits I've picked up. The truth of the matter is that it doesn't take much effort to get into good habits that make web sites more accessible.

I really liked Jeremy Keith's AJAX sessions. The first session started out a little basic, but became more interesting in the second half. In the second session Jeremy discussed a technique he called "Hijax", which aims to ensure accessibility and support for down-level browsers. I am looking forward to seeing how well I can apply his techniques using ATLAS.

While on the topic of libraries, Jeremy did make one statement that I'm not sure I can agree with. He said that he didn't believe in using third-party libraries for AJAX. His first reason was that AJAX isn't that complicated. His second was that if something goes wrong in the library, you as a developer will need to be able to fix it. I almost agree with his first point, but even so, I am a big fan of NOT re-inventing the wheel. Suppose a library has a great ranking control, for example, and it has been coded to work across all browsers in your supported browser matrix. Using it could save you a lot of testing and coding. There are always bugs in any software, and AJAX APIs are no exception. Part of a good developer's skill is using an API in a way that works around any bugs in it, and I've lost count of how many times I've had to do this myself. Also, as a WinForms developer, I am extremely grateful that I no longer have to write Win32, and I'm sure those of you who have written Win32 would agree with me. Having said that, his Hijax mechanism of progressive enhancement is really cool. My current goal is to go through all the ATLAS controls (the API I'm currently using) and see how I can apply this technique to them.
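Hijax boils down to building the page so that ordinary links and forms work without JavaScript. Then, if script is available, you intercept them and make the same request in the background. Below is a minimal sketch. `hijaxLink`, `fetchFragment` and `render` are hypothetical names, not part of ATLAS or of Jeremy Keith's code. The fetch function is passed in so that any XMLHttpRequest wrapper can be used.

```javascript
// Minimal Hijax-style sketch. Without JavaScript the link is a normal
// link to a full page. With JavaScript, the click is intercepted, the
// same URL is fetched asynchronously and the result is rendered in place.
//   link          - an anchor element (anything with href and addEventListener)
//   fetchFragment - function (url, onSuccess) performing the background request
//   render        - function (responseText) that updates part of the page
function hijaxLink(link, fetchFragment, render) {
  link.addEventListener("click", function (event) {
    event.preventDefault(); // cancel the normal full-page navigation
    fetchFragment(link.href, render);
  });
}
```

In a real page, `link` would come from something like `document.getElementById`. If script is turned off, none of this runs and the plain link still takes the user to the full page.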

I went along to John Allsopp's talk on microformats, hoping to pick up anything I'd missed the first time I heard it, and was inspired all over again.

One minor thing I think the organisers could improve on is something I saw at the Tech-Ed conference: "re-charge desks". The Tech-Ed organisers had desks with a series of power boards that people could plug their laptops into between sessions. This would have been really good for me. My laptop is now a year and a half old, and its battery is showing its age; it wasn't even lasting two hours.

Worth mentioning:

- The last speaker of the conference was Mark Pesce (inventor of VRML). He explained some of his concepts of social software and described a project he worked on that attempted to aggregate your social behavior patterns and build social network models, simply by using a Bluetooth-enabled phone. I thought some people at Readify would be quite interested in this sort of stuff. http://relationalspace.org/

tags: wd06

I must confess that although I am excited by the whole AJAX phenomenon, and by the rich client experience it provides in the majority of desktop browsers, I sometimes worry about those who, for whatever reason, can't experience the full beauty of a JavaScript-enabled client. There are two main groups of people who are missing out.

So I am always looking for ways to enhance these users' experience.

My AJAX toolkit of choice (more through accident than intent) is ATLAS, Microsoft's library. ATLAS contains a quite powerful data-enabled control called a ListView. It works on a client-side, JavaScript-based object model, and the best way to get to know it is to read the data section of the ATLAS documentation.

So the idea is to get a funky ATLAS-based website working, and then to create, as quickly as possible, a version of it for people who can't (or won't) use JavaScript. This is similar to what Google does with Gmail.

I wanted to make the sample as simple as possible without losing anything along the way, so I decided to create a shopping list that simply contains a quantity (double) and an item (string).

The beauty of the ListView in ATLAS is that it can bind through the System.ComponentModel model, just like other data binding in ASP.Net 2.0. So the first step is creating a web service that derives from Microsoft.Web.DataService (refer to the ATLAS documentation for more details). I then create my ListView templates, hook it all up using XML-Script, and pretty it up with any CSS I may care to add. The result can be seen here (please forgive me, I'm not a graphic artist).

Once this was done, I needed to create a non-ATLAS version of the same page. My web service already used the System.ComponentModel model, so creating it was extremely easy. I added a GridView control, selected an ObjectDataSource, wired it up, and away it went. All in all it took me about 10 minutes to create the non-ATLAS page. It would have taken me another 2 minutes to enable things like edit/delete, but for the moment I wanted to keep it the same as the ATLAS version; this may be the subject of a future post. The only significant difference between the two pages is that the non-ATLAS version has a Select link, whereas the ATLAS version handles selection simply by responding to a mouse click.
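The design choice that makes the second page so cheap is that both pages talk to one set of select/insert/update/delete operations over the same rows. The original service is a C# data service. Purely to illustrate that shape, here is a hypothetical in-memory stand-in in JavaScript. `ShoppingListService` and the `id` field are assumptions added for the sketch, not part of ATLAS or of the original code.

```javascript
// Hypothetical in-memory stand-in for the shopping-list data service.
// Each row has a quantity (number) and an item (string); the id field is
// an assumption so rows can be updated and removed.
class ShoppingListService {
  constructor() {
    this.rows = [];
    this.nextId = 1;
  }

  // Returns copies so callers cannot mutate the stored rows.
  select() {
    return this.rows.map(row => ({ ...row }));
  }

  insert(quantity, item) {
    const row = { id: this.nextId++, quantity, item };
    this.rows.push(row);
    return row.id;
  }

  // Returns false if no row has the given id.
  update(id, quantity, item) {
    const row = this.rows.find(r => r.id === id);
    if (!row) {
      return false;
    }
    row.quantity = quantity;
    row.item = item;
    return true;
  }

  // Returns true if a row was removed.
  remove(id) {
    const before = this.rows.length;
    this.rows = this.rows.filter(r => r.id !== id);
    return this.rows.length < before;
  }
}
```

A client-side list and a server-rendered grid could both sit on top of an object like this without either knowing about the other.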

Once you have these two pages, it is simply a matter of choosing your favourite way to direct users with different browser capabilities to the correct page.
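Here is one possible way to do the directing, sketched under some assumptions. Make the non-JavaScript GridView page the default, and put a small script on it that sends capable browsers to the ATLAS page. Visitors without script never run it and simply stay put. `atlasRedirectTarget` and the page name are hypothetical.

```javascript
// Returns the URL to redirect to, or null to stay on the basic page.
// Feature detection: only browsers with XMLHttpRequest (or the ActiveX
// equivalent in older Internet Explorer) are sent to the ATLAS page.
function atlasRedirectTarget(win, atlasUrl) {
  const canDoAjax =
    typeof win.XMLHttpRequest !== "undefined" ||
    typeof win.ActiveXObject !== "undefined";
  return canDoAjax ? atlasUrl : null;
}

// Typical use on the basic page (page name is hypothetical):
//   var target = atlasRedirectTarget(window, "ShoppingListAtlas.aspx");
//   if (target) { window.location.replace(target); }
```

Detecting the feature rather than sniffing the user-agent string means any browser with XMLHttpRequest support gets the rich version. Using `location.replace` keeps the basic page out of the history, so the Back button doesn't bounce the user straight back to the redirect.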

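```javascript
// Issues an asynchronous GET to url. If the response has not arrived
// within timeout milliseconds, the request is aborted and
// onTimeoutCallback('timeout') is called instead.
function MakeRequest(url, timeout, onCompleteCallback, onTimeoutCallback)
{
    var completeCallback = onCompleteCallback;
    var timeoutCallback = onTimeoutCallback;
    var timeoutId = null;
    var xmlHttp = createXMLHttpRequest();

    if (xmlHttp)
    {
        xmlHttp.onreadystatechange = function()
        {
            if (xmlHttp.readyState == 4)
            {
                // The request finished (or was aborted), so cancel the timer.
                window.clearTimeout(timeoutId);

                if (xmlHttp.status == 200 || xmlHttp.status == 304)
                {
                    completeCallback(xmlHttp.responseText);
                }
            }
        };

        xmlHttp.open("GET", url, true);

        // If the request is still in progress when the timer fires,
        // abort it and report a timeout.
        timeoutId = window.setTimeout(function()
        {
            if (callInProgress(xmlHttp))
            {
                xmlHttp.abort();
                timeoutCallback('timeout');
            }
        }, timeout);

        xmlHttp.send(null);
    }
}

// Returns true while the request is opened, sent or receiving (readyState 1-3).
function callInProgress(xmlHttp)
{
    switch (xmlHttp.readyState)
    {
        case 1:
        case 2:
        case 3:
            return true;

        // 0 (not yet opened) and 4 (complete)
        default:
            return false;
    }
}

// Creates an XMLHttpRequest, falling back to the ActiveX objects used by
// older versions of Internet Explorer. Returns null if none is available.
function createXMLHttpRequest()
{
    var xmlHttpRequest = null;

    if (window.XMLHttpRequest)
    {
        try { xmlHttpRequest = new XMLHttpRequest(); }
        catch (e) { return null; }
    }
    else if (window.ActiveXObject)
    {
        try { xmlHttpRequest = new ActiveXObject("Msxml2.XMLHTTP"); }
        catch (e)
        {
            try { xmlHttpRequest = new ActiveXObject("Microsoft.XMLHTTP"); }
            catch (e2) { return null; }
        }
    }

    return xmlHttpRequest;
}
```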
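A note on the `switch` in `callInProgress`: in JavaScript, `case 1, 2, 3:` is not a list of case values. The comma operator evaluates 1, 2 and 3 and yields the last one, so the label is simply `case 3:`, and readyStates 1 and 2 fall through to `default`. The two small functions below (hypothetical names, for illustration only) show the difference. Stacking separate `case` labels is the usual way to share one body.

```javascript
// The comma operator makes this label equivalent to `case 3:`.
function commaCaseMatches(readyState) {
  switch (readyState) {
    case 1, 2, 3:
      return true;
    default:
      return false;
  }
}

// Separate labels that fall through to one body match all three states.
function fallThroughMatches(readyState) {
  switch (readyState) {
    case 1:
    case 2:
    case 3:
      return true;
    default:
      return false;
  }
}

console.log(commaCaseMatches(1), fallThroughMatches(1)); // false true
```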

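```csharp
<%@ WebHandler Language="C#" Class="Handler" %>

using System;
using System.Web;

// Test handler (Handler.ashx): waits six seconds before responding, so the
// client-side timeout can be exercised by giving MakeRequest a timeout
// below 6000 ms.
public class Handler : IHttpHandler
{
    public void ProcessRequest(HttpContext context)
    {
        System.Threading.Thread.Sleep(6000); // simulate a slow server
        context.Response.ContentType = "text/plain";
        context.Response.Write(Guid.NewGuid().ToString());
    }

    public bool IsReusable
    {
        get { return false; }
    }
}
```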

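```javascript
// Request the slow handler with a 20 second timeout. The handler sleeps
// for 6 seconds, so onRequestComplete fires; pass a timeout below 6000
// to see onRequestTimeout instead.
MakeRequest('Handler.ashx?cookie=abcd&another=xyz', 20000,
    onRequestComplete, onRequestTimeout);

function onRequestTimeout(response)
{
    alert('timeout');
}

function onRequestComplete(response)
{
    alert(response);
}
```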